Your AI Tools Are Working. Your Ownership Model Is Not.

Every team frustrated with AI ROI shares a pattern. The tools work. Tickets close faster. Engineers feel more productive. And the business outcomes have not materially changed.

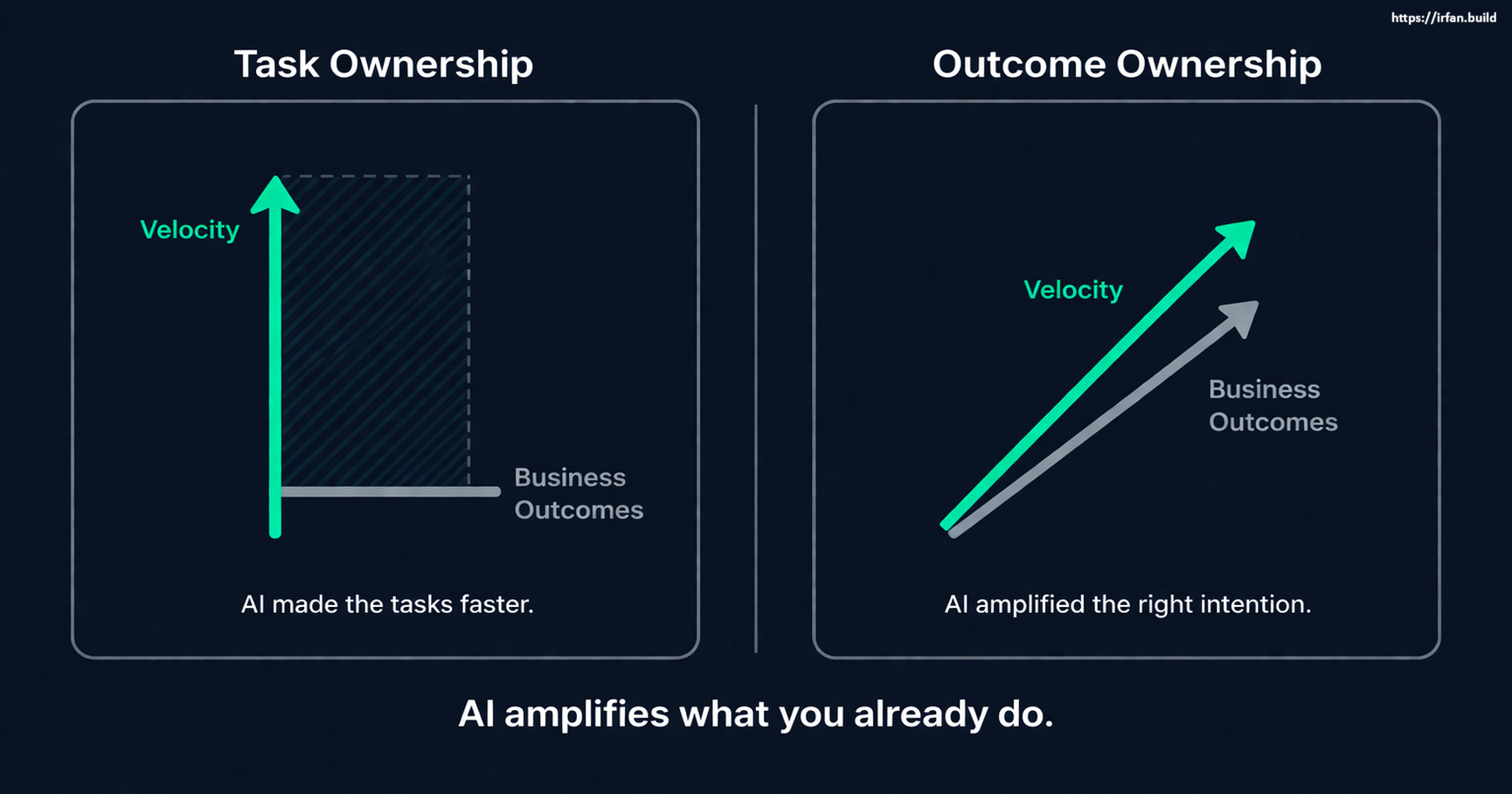

This is not a tools problem. AI is working exactly as designed: it amplifies what the team already does. When teams own tasks, AI makes them faster at tasks. When the business outcome does not improve, the problem is not which tools the team uses. It is what those tools are amplifying.

The ROI Gap Is Not a Tool Problem

The standard response to poor AI ROI is more tooling: better prompts, more experimentation, different models, workflow changes. None of this is wrong. None of it is the problem.

The teams where AI has genuinely moved business metrics share one characteristic: someone owned the outcome before the sprint started. They defined what success looked like in terms the business cared about. They held the spec. They made the call when scope tried to expand. When the sprint ended, they could say whether the thing they built actually solved what they thought it would solve.

Teams where AI made no business difference also share a characteristic: work was assigned in tasks. Engineers owned the implementation. PMs owned the requirements document. QA owned the release criteria. Nobody owned the outcome. Accountability was distributed across handoffs, and at every handoff, the question of whether this was the right thing to build was someone else's problem.

Upgrading the tools in that structure produces faster handoffs. It does not produce better outcomes.

AI Amplifies What You Already Do

The mechanism is straightforward. AI reduces the friction between an intention and its execution. If the intention is to close the ticket, AI helps close the ticket faster. If the intention is to solve the problem, AI helps get to the solution faster.

The intention is yours. AI does not supply it.

This is why the "more tooling" response fails. Better tools reduce friction on whatever intention the team already has. When the intention is task completion, better tools produce faster task completion. They do not produce better outcomes, because outcomes were never the unit of work.

You do not have a tooling problem. You have an intention problem. And new models do not fix that.

What "Owning an Outcome" Actually Means

Outcome ownership is not a mindset. It is a structural position.

An engineer who owns a task is accountable for reading the spec, implementing it, and raising a PR that passes review. Accountability ends at the pull request.

An engineer who owns an outcome is accountable for understanding the problem the ticket is trying to solve, building something that actually solves it, and knowing whether the thing they shipped worked. Accountability ends when the metric moves, or does not.

These are structurally different positions. The first produces predictable handoffs. The second produces business-relevant results. The first fits cleanly into sprint planning. The second does not fit neatly into story points.

Software teams have organized around the first model for 25 years because execution was expensive. When you are rationing human coding capacity, clean handoffs and clear accountability boundaries make sense. Task-based ownership scaled. Outcome ownership did not.

When execution gets cheap, the model inverts. A team where five people each own a slice of the problem is slower than a team of two in which each person owns an outcome end to end and uses AI to execute every part of it.

What AI-Amplified Task Ownership Looks Like

You can see the gap in how engineers use the tools.

Task ownership shows up as: generate the implementation, review it, ship it. The intent is completing the ticket. AI is a faster implementation layer. Output increases. Velocity metrics improve. Ticket count goes up.

And then the sprint review happens. The PM says velocity was up. The engineering lead confirms commitments were hit. And the product question, "Did this move the metric we were trying to move?", goes unanswered, because nobody was accountable for it.

The symptoms are consistent: high tool adoption, rising productivity reports, flat business metrics. The diagnosis is always the same. Teams organized around task completion used AI to complete tasks faster. That is exactly what AI did. It worked as intended.

What AI-Amplified Outcome Ownership Looks Like

Outcome ownership with AI starts with a problem statement, not a ticket.

What problem are we solving? What does success look like in a way we can measure? What would tell us we were wrong?

The engineer uses AI to close the distance between the problem and a working solution. They generate the implementation, test it against real conditions, check whether it is doing what they intended, and adjust. They are not submitting work for review. They are validating a hypothesis.

The sprint does not end with "we closed 24 tickets." It ends with "this metric moved, this one did not, and here is what we are doing next based on what we learned."

Teams that operate this way produce less code per sprint. They close fewer tickets. And they move business metrics faster, because every sprint is anchored to an outcome that someone is accountable for.

The Question That Surfaces the Problem

If you want to diagnose where the ownership problem lives in your organization, there is one question that surfaces it quickly.

Pick any feature shipped in the last sprint. Ask: who is accountable for whether this feature achieved the outcome it was built to achieve?

If the answer is "the PM owns the requirements, the engineers own the implementation," the accountability is distributed across a handoff. Nobody owns the outcome.

If the answer is a name, and that person can tell you what the outcome was and whether it was achieved, the accountability is real.

AI will not fix a team organized around task ownership. It will accelerate it.

The question for any engineering leader frustrated with AI ROI is not which tools to adopt. It is who owns the outcome, not the ticket. If the honest answer is nobody, no model upgrade will solve it.

I help engineering teams close the gap between "we use AI tools" and "AI actually changed how we deliver." Book a 20-minute call and I'll tell you where the leverage is.

Working on something similar?

I work with founders and engineering leaders who want to close the gap between what their technology can do and what it's actually delivering.