AI Didn't Change Software Engineering. It Exposed It.

For 30 years, writing code was the bottleneck in software. AI removed it. Now teams can see which engineers had judgment, and which had only execution.

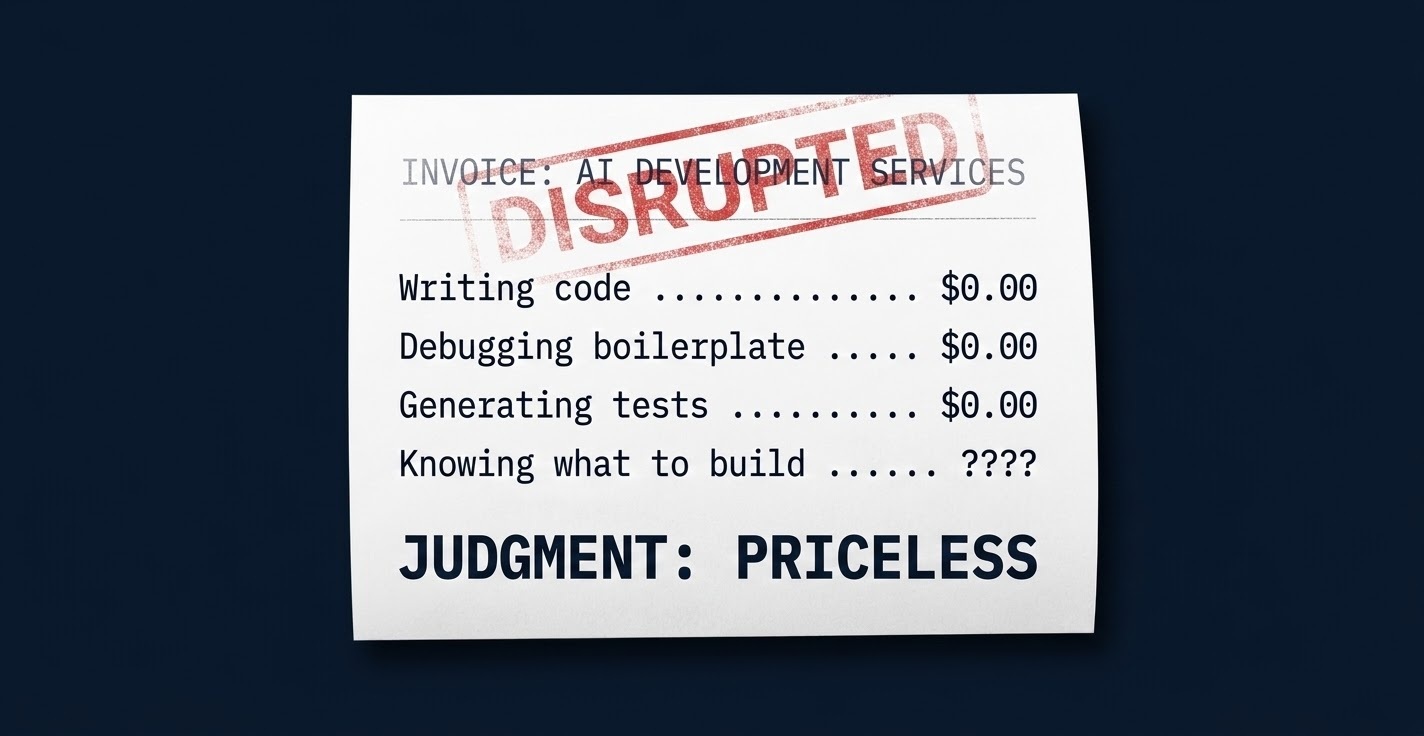

For 30 years, writing code was the bottleneck in software engineering. That scarcity created a useful illusion: execution looked like the valuable thing, so we hired for it, measured it, and rewarded it. AI is removing that bottleneck now, and in doing so, it is revealing that execution was never the real value. The real value was always judgment. We just could not separate them until the price of one collapsed toward zero.

When Execution Was Scarce, It Hid Everything Else

For most of software's history, writing working code was genuinely hard. Not hard in the way that proving a novel mathematical theorem is hard, but hard in the sense that it was skilled, time-consuming work that most people could not do well or quickly. That scarcity meant that to build software, you had to hire people who could execute. There was no other option.

The problem was that execution and judgment came bundled in the same hire. The best engineers you have ever worked with were not remarkable because they wrote fast, clean code. They were remarkable because they knew which problem to solve, which abstraction would hold up over time, which feature would quietly break something else in three months. Their judgment was their real value. But because they also happened to write excellent code, you could not separate the two.

So the industry hired for execution. If someone could pass the coding interview, build the feature, and close the ticket, they were a software engineer. Whether they also had judgment was something you found out later, usually through the quality of the systems they built over time, and occasionally through the quality of the systems they destroyed.

This was a reasonable approximation. When execution was the constraint, the people who could execute fast tended to be the people who had also spent enough time in the craft to develop judgment. The two qualities correlated imperfectly, but well enough that optimizing for the proxy worked. Your team shipped software. The illusion held.

Then execution got cheap.

AI Separated Execution from Judgment. Now You Can See Both.

The data on what is actually happening is fairly clear. Anthropic's analysis of more than 500,000 coding interactions found that 79 percent of conversations on Claude Code, its agentic coding tool, were automation-oriented rather than augmentation-oriented. These were not engineers using AI as a faster autocomplete. They were engineers delegating entire tasks, entire functions, entire modules to an agent, then reviewing the output.

This shift in how work gets done has a predictable downstream effect on hiring. Related research has found early evidence of slower hiring for workers aged 22 to 25 in software roles with high AI exposure. Not mass unemployment. Compression. In many teams, the work that used to justify three junior engineers is now being handled by one engineer and a set of agents running in parallel.

What this means is that execution is decoupling from employment in software, not in some dramatic future sense, but quietly, in the composition of job reqs and team headcounts right now. The engineers who are thriving in this environment are not the ones using AI most aggressively. They are the ones whose value was always upstream of execution.

I have watched this play out across teams. Two engineers, same company, same tools, same set of problems. One became dramatically more productive. The other produced more volume but not more value. The difference had nothing to do with how they used the AI tools. It had everything to do with how they thought about problems before the tools got involved.

The engineer who thrived was already judgment-first. She had always spent most of her cognitive energy on what to build and why, not on the mechanics of how to build it. AI gave her more execution capacity without drawing on that energy. The engineer who struggled had built his career on being good at the execution layer. Once AI was doing that layer, he was exposed.

AI did not make either of them. It revealed both of them.

The Engineers Who Thrive With AI Were Already Judgment-First

This is the part that makes the AI productivity conversation uncomfortable.

The companies reporting real gains from AI coding tools are not reporting that their whole team got better. They are reporting that the engineers who were already strong became significantly stronger. And some of those companies are quietly discovering that a portion of their engineering headcount was primarily executing, not thinking, and that AI can now handle much of what that headcount was doing.

This is not an AI problem. This is an org design revelation.

The roles most exposed are not the roles closest to code. They are the roles built around low-context execution: the engineer who never owns an outcome, only a ticket; the product manager who mainly writes requirements rather than discovering the problem behind them; the QA engineer who writes test cases from specs without ever questioning whether the specs were right in the first place. These roles existed because execution was expensive and needed to be distributed across many people. When execution gets cheap, the overhead of distributing it becomes visible.

The roles that gain leverage are the ones built around judgment: the staff engineer who understands how systems fail at scale; the product manager who can identify the problem behind the problem; the engineering leader who can decompose a business outcome into the right technical approach. These people were already more valuable than the org chart suggested. AI is making their relative value dramatically clearer.

The uncomfortable part is that level does not predict which category you fall into. A senior engineer who owns a large codebase but only does what the PM specifies is execution. A junior engineer who pushes back on requirements, proposes alternatives, and takes ownership of outcomes is judgment. Both hold the title of software engineer. Their trajectories do not look alike.

Which Parts of Your Org Chart Exist Because the Work Requires Judgment?

Here is the question most engineering leaders are avoiding.

Look at your team. Not at the titles, not at the performance reviews, but at the actual work each person is doing in a given week. How much of it requires genuine judgment: making a call that could go wrong, owning an outcome under uncertainty, deciding what should not be built? And how much of it is execution that you needed a human body to handle because, until recently, there was no other option?

That second category is not disappearing immediately. Reliability, integration, security, and governance are still genuinely hard in ways that current AI tools are not yet solving. The timeline here is years, not months. But the direction is clear: the work that will still need humans over the next three to five years is the work that depends on judgment, domain expertise, accountability, and the ability to operate under genuine ambiguity.

Microsoft's 2025 Work Trend Index found that 33 percent of leaders are considering headcount reductions while simultaneously adding AI-specific roles. That tension is not confusion. It is org redesign happening in real time. Fewer people doing commodity execution. More value expected from the people who remain. The title "software engineer" survives. The job underneath it changes substantially.

The World Economic Forum's Future of Jobs Report 2025 still projects software developers among the fastest-growing roles through 2030. Growth and disruption are not mutually exclusive. More software gets built, with fewer people doing the parts that AI has absorbed. The people who remain are operating at a higher level of responsibility, or they are at risk of becoming the next layer of compression.

This is not the future I am predicting. It is the present I have seen taking shape across teams.

Build for Judgment, Not for Execution

If you are running an engineering team, there are a few practical conclusions to draw from this.

Stop measuring execution as a proxy for value. PR counts, story points, lines of code: these were always imperfect proxies, and AI just made them actively misleading. An engineer who closes 30 tickets with AI assistance and an engineer who closes 10 tickets by owning a complex system outcome are not comparable on any execution metric. The question is which work moved the product forward and which was velocity for its own sake. Most teams are measuring the second thing and calling it the first.

The engineers you want to retain and develop are the judgment-holders. Not the ones with the longest commit streaks or the fastest cycle times. The ones who say "we should not build this" and are usually right. The ones who read a messy business requirement and translate it into a system that actually solves the underlying problem, not the stated one. Those people are becoming more valuable, not less. Your performance framework should reflect that.

Think carefully about what your team structure was designed for. Most engineering org charts were designed for a world where execution was the bottleneck: enough people to write enough code to ship enough product. The org that outperforms in an AI-native environment looks different. Smaller core teams. Higher expectations per person. More emphasis on platform, reliability, and security, because when code gets generated faster, the cost of bad defaults rises. More tolerance for ambiguity at the individual contributor level, because the work that is left is the work that does not come with clear instructions.

None of this means AI is replacing engineers at scale. The evidence does not support mass displacement. What it supports is compression at the routine execution layer, expansion of scope for people who can handle ambiguity, and a significant shift in what "good" looks like in this profession.

The engineers who saw this coming were not the ones who learned to prompt AI better. They were the ones who always cared more about the problem than the code. They were already building software. The others were writing code, and in many environments, that was enough. It is not going to be enough for much longer.

AI did not change what matters in software engineering. It removed the scaffolding that had been hiding who actually had it.

I help engineering teams close the gap between "we use AI tools" and "AI actually changed how we deliver." Book a 20-minute call and I'll tell you where the leverage is.
